Structuring the Unstructured and ScienceIO

Get Out-Of-Pocket in your email

Looking to hire the best talent in healthcare? Check out the OOP Talent Collective - where vetted candidates are looking for their next gig. Learn more here or check it out yourself.

Hire from the Out-Of-Pocket talent collective

Hire from the Out-Of-Pocket talent collectiveAI x RCM Masterclass

.gif)

Healthcare 101 Crash Course

%2520(1).gif)

Featured Jobs

Finance Associate - Spark Advisors

- Spark Advisors helps seniors enroll in Medicare and understand their benefits by monitoring coverage, figuring out the right benefits, and deal with insurance issues. They're hiring a finance associate.

- firsthand is building technology and services to dramatically change the lives of those with serious mental illness who have fallen through the gaps in the safety net. They are hiring a data engineer to build first of its kind infrastructure to empower their peer-led care team.

- J2 Health brings together best in class data and purpose built software to enable healthcare organizations to optimize provider network performance. They're hiring a data scientist.

Looking for a job in health tech? Check out the other awesome healthcare jobs on the job board + give your preferences to get alerted to new postings.

TL:DR

ScienceIO has built an API to structure, de-identify, and annotate unstructured healthcare text data. They’ve trained a generalizable natural language processing (NLP) model on a massive labeled healthcare dataset. The company is making it easier for anyone to use NLP in their workflows, not just data scientists or large companies. The company will face challenges from nuances with dealing with real-world text data, competition, and people choosing human abstraction vs. software. But having more tools to capture value from the trove of text data healthcare continues to generate is going to be extremely important going forward.

This is a sponsored post - you can read more about my rules/thoughts on sponsored posts here. If you’re interested in having a sponsored post done, email nikhil@outofpocket.health.

Company Name - ScienceIO

ScienceIO is a company that’s creating APIs which make it easier to structure unstructured clinical data.

They’re called ScienceIO because they were just as surprised as you that the URL was available and snatched it up. Plus now they build whatever products they want and just put it under the umbrella of improving “science”. It’s pretty genius.

The company was started by Will Manidis and Gaurav Kaushik who originally met at Foundation Medicine. The company recently announced their $8M raise from Section32, Lachy Groom, Packy McCormick, and more.

What pain points do they solve?

This might be news to you but…healthcare data kind of sucks to work with. The reality is that data is coming from a bunch of different sources, and when the data is input it’s not structured to optimize for third-party use cases in most instances.

A lot of that unstructured data tends to be text because instead of trying to get end users to conform to standard fields and structures, we’ve decided to let all the end users do their own thing with no regard for the downstream uses of that data. Plus it’s all in f***ing PDFs still.

As a result, there are a constellation of teams within companies or third-party firms who will go through the unstructured text data and actually structure it themselves for the end use case required. For example, medical coders will take an unstructured clinical note about a patient encounter and then parse that into the appropriate CPT and ICD-10 codes that will generate a bill. Or patient recruitment companies will have people that go through the clinical note on an image/in a patient record to identify which patients will be eligible for certain types of clinical trials. The people whose job is to structure this data are called “abstractors”.

There are a couple of problems with this. For one, abstractors can be very expensive since they need to actually have some medical training in a lot of cases or have to be trained for that task specifically (e.g. knowing the ICD/CPT mapping to be a medical coder). Another is that there is more data generated than abstractors could ever get through realistically. And finally, companies would need to have and dedicate engineering/data team resources to a pretty unsexy problem of data structuring.

To assist with parsing this, companies deploy natural language processing (NLP) models. But historically there have been issues with this:

- There’s a lot of variance in how text data is encoded - clinicians might write things differently, regional or language differences could mess things up, or there could be ambiguity when a pronoun like “this” is used and it’s unclear which noun it’s referencing.

- NLP models were generally specific to parsing a dataset type (e.g. prescription drugs, molecular data, symptoms etc.) where the model was trained to understand the words within the context of that data type. But that could lead to conflicts between the models - if “estrogen receptor” is a word the chemical model would flag “estrogen” and the gene model would flag “estrogen receptor”.

- To resolve that conflict you’d need to map the correct version to the right entity then normalize that between the models for future runs. Those entities need to be stored in some sort of knowledge graph/ontology. However, this needs to be constantly evolving as new entities are created or branch off.

- In order to get all of the above up and running, the amount of engineering resources needed is not insignificant, so the barrier to use is pretty high.

In the last few years, advances in deep learning + availability of training data + commonly agreed upon ontologies has made it easier to build new NLP models in healthcare.

What does the company do?

ScienceIO is an out-of-the-box API that lets developers use their NLP model to parse and identify key information in unstructured text data. You put text data in, get funny colors on those words back. This is not dissimilar from doing drugs.

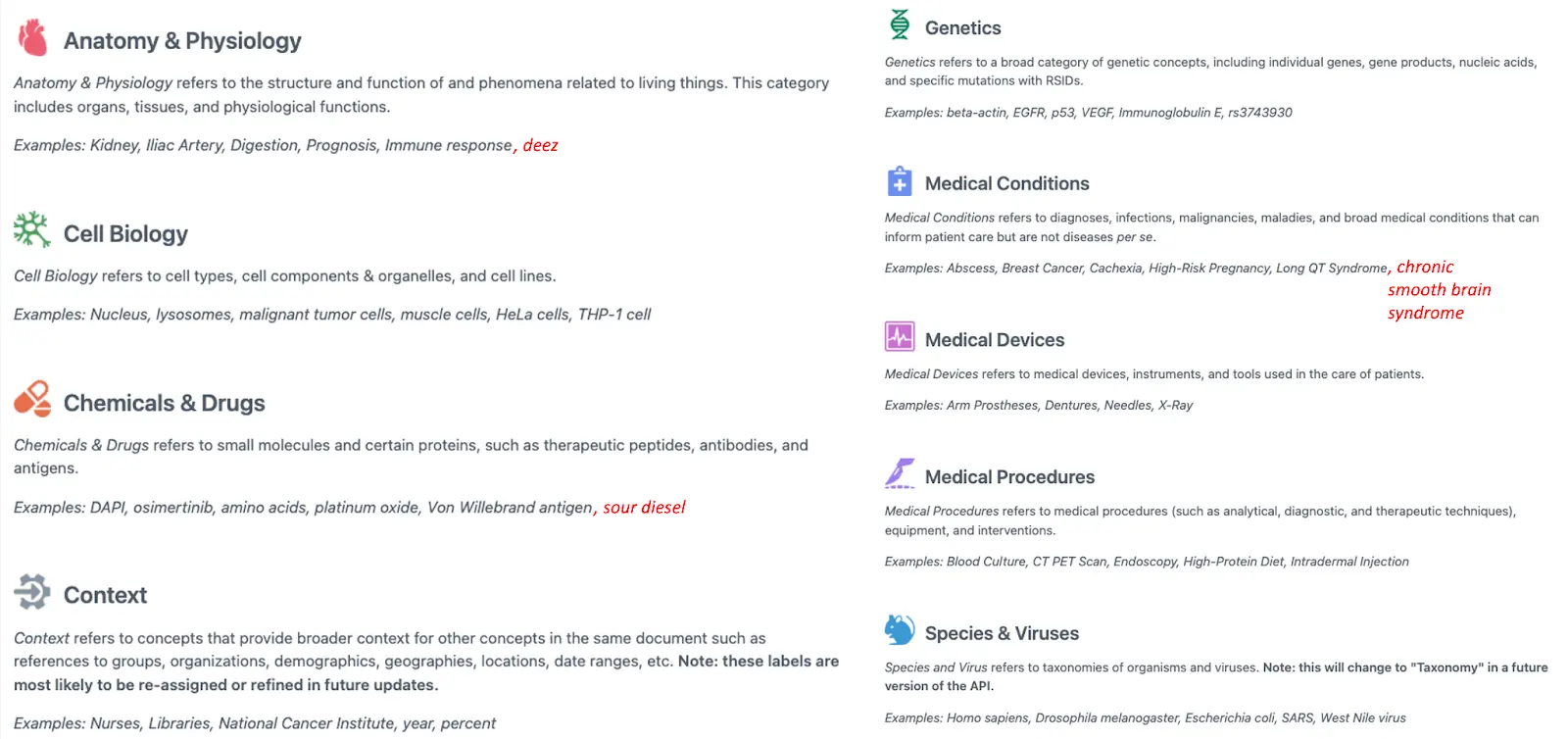

More seriously, the model identifies key terms, the context in which they’re being referred, and then maps those terms to different concepts, which you can see below. It can ingest this data as straight text or can identify and extract elements from PDFs as well.

The company has two separate processes they built to create this API. The first is a high-precision data annotation platform called Tycho that goes through available repositories of data to generate higher accuracy labels. The secret sauce on how they did this was explained to me but I was sworn to secrecy and death if I revealed it, but also, I’m not a data scientist and could barely repeat it back to you if I tried. To date the company now has a dataset of 2.2 billion labels in over 20M documents spanning clinical trial records, physicians notes, and clinical research papers. The second process called Kepler uses that labeled dataset to train a model that can identify and map terms to concepts. I think there’s a third model called Galileo that then trains to fight the church but they haven’t shown it to me.

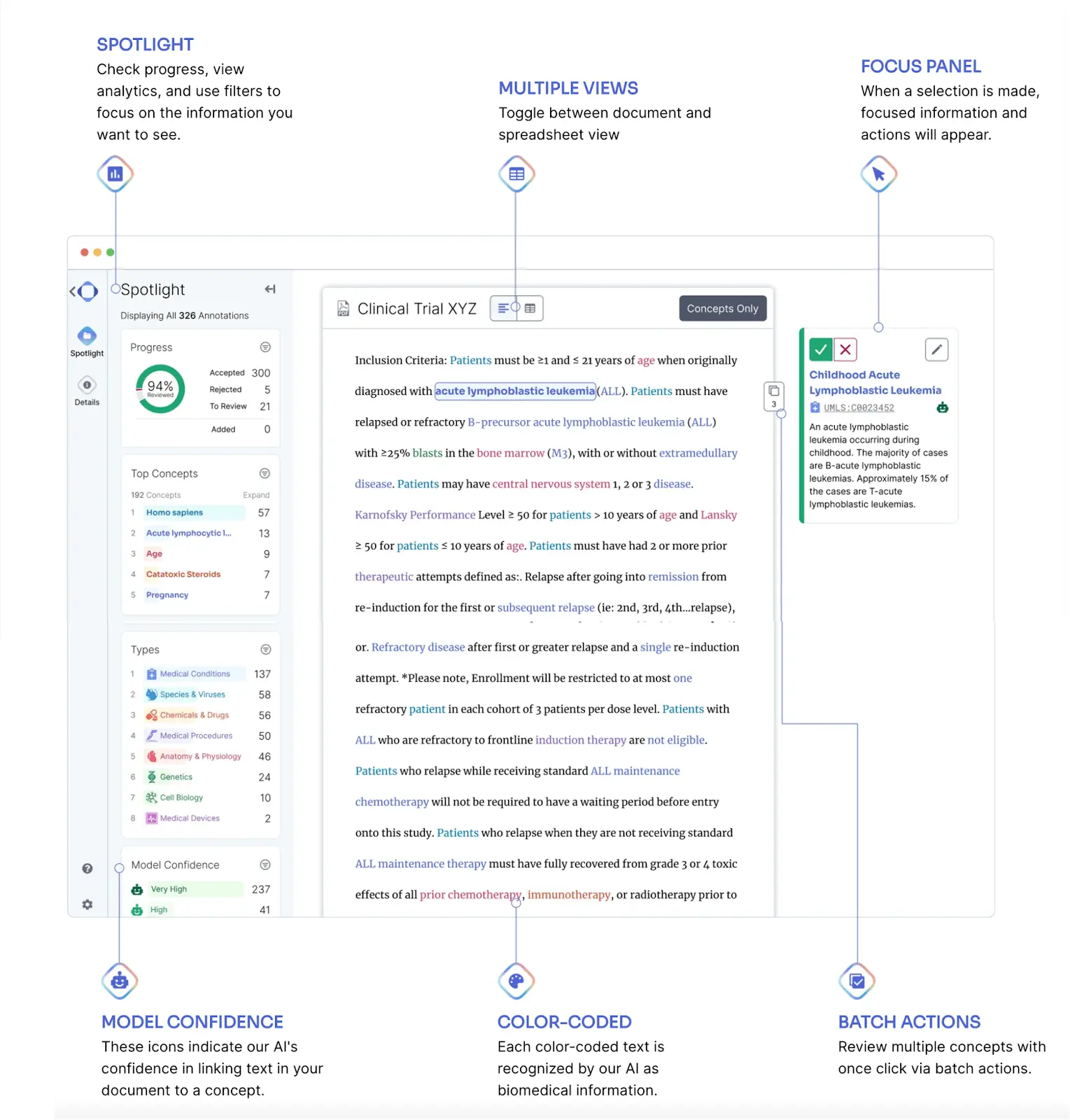

The model is designed to fit into existing workflows where NLP would be useful. The API can be used right out of the box, and it’ll distinguish when it has higher and lower confidence in its suggestions. A separate product called Annotate lets customers overlay the model onto the document and see the different concepts highlighted as well as the confidence score of how that term is getting mapped. Annotate has several workflow tools that can speed up data abstraction like being able to filter by a concept (e.g. medication) and batch annotate concepts that come up several times in a document.

You can actually give the API a spin and mess around with it yourself here, though right now there’s a waitlist. They have all these public API docs you can poke around to see the functionality currently as well.

ScienceIO has two other products coming to market soon. One is the very cool named product called Dojo, which lets companies use the base ScienceIO model but then can train it themselves on new labels or concepts they want to add. The second is the very literal named personal health information redaction product called…PHI redaction. This lets companies send documents/text data, and get the key identifiable health information redacted for cases where you need to anonymize the data (e.g. sharing with third-parties or people with access permissions).

Both of these products are scheduled to come out later this year. As a suite, these four products let companies ingest data and then manipulate it for whatever use case they want.

What is the business model and who is the end user?

The company currently charges per user per month and tiers the different products as you can see on their pricing page.

Here are some different use cases where the company has customers.

Creating data labeling workflows - Right now if you want to generate your own annotations and labels on datasets you own, it’s a chore. You can either hire your own people and build a workflow for them, outsource to third-parties who will do the labeling on your data, or buy datasets from other companies (which usually means other companies are buying this same data too). By combining the PHI redaction tool and the ScienceIO API or Annotate tools, you can set up workflows for anyone to help with data labeling.

Pharma intelligence - Life science teams are frequently trying to find companies, assets, molecules, etc. that are targeting diseases they might be interested in. A lot of this data lives in free text like clinicaltrials.gov, research papers, etc. In order to keep up with this, some customers connect ScienceIO to parse through the massive influx of free-text information they access to highlight key terms like a certain mechanism of action or target disease, etc.

Billing - For companies that need to turn encounter notes (e.g. what happened during a visit) into a set of billable codes to send to insurance, you’ll usually have medical coders look at the note and map it to certain codes which tell the insurance companies what they’re paying for. Not only is this a form of parsing itself, but a lot of back and forth happens between the physician and coding teams to figure out if all the right info is in the note, if things are being mapped to the correct codes, etc. ScienceIO’s API helps parse through this and can proactively highlight things physicians need to document in the encounter to map to the correct codes (and later this year, generate the codes automatically).

Job Openings

ScienceIO is hiring for lots of different roles, including:

- Senior Full Stack Engineer

- Senior Machine Learning Engineer - Deep Learning

And lots of others you can see here.

___

Out-Of-Pocket Take

If you’ve been reading this newsletter, you’ll know I’m a fan of API-first companies with nice documentation that are easy to get started with. Here are a few of the things I like about ScienceIO.

The PDF to structured data pipeline - the unfortunate reality is that valuable data in healthcare is going to be sent in PDF and unstructured text for a long time.

If you ever get a chance to look into how information is shared in healthcare it’s astonishing how much of it is PDFs and free-text fields. Building tools and workflows that can sift through this morass of data is going to be key since the total amount of unstructured data is likely to increase and come from even more places. Coaches notes, patient journals, asynchronous telemedicine, etc. are increasing the production of unstructured text.

ML-Powered Workflows - I’m a big fan of workflows that can meaningfully change thanks to using machine learning in the background. I think ScienceIO’s model can power these workflows, and you already see bits of it in their Annotate product which can do small things like identify a term, recognize it’s in the same concept as other terms later in a document, and batch annotate all of them at once.

According to ScienceIO, these workflow tools result in a 20x faster labeling vs. manual labeling. Though maybe they tested it against people like me who had to cheat to beat Mavis Beacon cause my words per minute is so bad.

Democratization of Deep Learning - The reality is that not everyone is going to have a data science team in-house to build custom NLP algorithms or even stitch together existing models for lots of different custom use cases internally. By making the API accessible to basically anyone that knows python, there can be more experimentation within companies + accessibility to small companies.

That’s how you start seeing things like using NLP tools in different operations team workflows or helping product teams sift through user research. These are all relatively small tasks that would probably be overkill to dedicate a data scientist to, but could still really benefit from an easy-to-get-started NLP API. Plus if you train your own version of the ScienceIO API on things that are specific to your company, you can also use the model in other places within the org. At a couple other companies I worked at we used to use some of these open-source NLP packages to experiment during our hack days, and some of them actually became parts of the product.

--

As with any company, there will always be challenges for a company like this as it comes to market and scales up.

Other Healthcare NLP Tools - There’s competition from other companies in this space that sell their own healthcare NLP software, especially from big tech companies. ScienceIO is specifically dedicated to this one product and sees itself as differentiated because of the unique labeled training dataset they’ve built and therefore how well the product identifies and understands terms (especially in the relevant context which they show up). The company will probably need to build additional tools/models later for certain key high value tasks that show up a lot with customers, but having a good general model to start from should theoretically make that easier.

Variability In Real-World Deployment - No matter how much training data you have, there are lots of idiosyncrasies when it comes to real-world use of any AI/ML models. Especially with areas like unstructured text - there might be shorthand/syntax that a specific physician or company uses. ScienceIO is hoping to mitigate this by giving confidence scores in their predictions and letting humans in the loop confirm/reject areas where specificity is required. Plus, they’re eventually hoping to let companies use their base model and then train it for their custom use case or to handle specific language nuances in the text data they’re working with.

It’s pretty easy to test it out yourself on your own data. You can sign up for access here.

Choosing Humans vs. Software - As I said before, there are lots of people whose job is to structure text data and they have workflows they’ve been doing for a while. There’s going to be some inertia for companies like ScienceIO that want to insert themselves in and change that workflow. In many cases the decision might just be adding more human capital to the decision (which is understood and predictable) vs. redesigning workflows around an NLP API they’ve never used. Startups present a good opportunity right now since many are building their workflows from scratch, but for larger enterprises it might be tougher to wedge in there.

---

ScienceIO wants NLP to become more widely used in healthcare, and I think that’s a great goal to have. We’re missing a ton of value from the data locked in text today, and hopefully new tools like this will help us better capture that value.

Thinkboi out,

Nikhil aka. “You down with NLP, yeah you know me”

Twitter: @nikillinit

Other posts: outofpocket.health/posts

{{sub-form}}

---

If you’re enjoying the newsletter, do me a solid and shoot this over to a friend or healthcare slack channel and tell them to sign up. The line between unemployment and founder of a startup is traction and whether your parents believe you have a job.

Quick interlude - Healthcare 101! Upcoming conferences!

See All Courses →A few quick things - Healthcare 101 starts soon! Let me teach you how healthcare works, cause this mess is confusing.

If you need to look smart in front of healthcare clients, get your team up to speed quickly on how healthcare works, or you’re trying out where in healthcare you want to build…this course is for you.

Also…we have 3 big events coming up.

- Healthcare Data Camp for anyone who touches healthcare data

- A new healthcare software engineering conference in New York on 9/18 (opening soon)

- Ops Knowledgefest, for healthcare ops people in SF on 10/17 (opening soon)

We’re going to launch all of these things very soon so keep an eye out. If these are areas you want to know sponsorship options, we should chat.

Quick Interlude - Data Camp Applications Due Soon!!

See All Courses →Don’t forget…DATA CAMP IS COMING TO YOU THIS JUNE! Applications are due 4/24, but fill it out now before you forget. More details on the site. It’s all breakouts, a small curated group, and focused on tactical things to bring to work on Monday.

We’re also taking our first sponsors now - so if you want to get in front of data engineers/healthcare data pros, let us know.

Quick Interlude - Data Camp Applications Due Soon!!

See All Courses →Don’t forget…DATA CAMP IS COMING TO YOU THIS JUNE! Applications are due 4/24, but fill it out now before you forget. More details on the site. It’s all breakouts, a small curated group, and focused on tactical things to bring to work on Monday.

We’re also taking our first sponsors now - so if you want to get in front of data engineers/healthcare data pros, let us know.